- Business Rules transformations in SAS® Data Integration Studio cannot display an object with a minor version number greater than 99 (C5F001: 63224).
- The CONTAINS operator on the 'Filter type' menu for the View Data window in SAS® Data Integration Studio incorrectly behaves as a LIKE operator (C5F001: 63434).
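To see why the second issue matters, it helps to compare the two operators. In SAS WHERE expressions, CONTAINS selects values that include the operand anywhere as a substring, while LIKE matches the whole value against a pattern in which `%` stands for any sequence of characters and `_` for exactly one character. The Python sketch below is a simplified model of those semantics, not SAS code. The helper names `contains` and `like` are hypothetical, and it ignores SAS-specific details such as blank padding of character values. It shows one plausible consequence of the defect: a filter value without wildcards that should match as a substring matches only identical values when treated as LIKE.

```python
import re


def contains(value: str, operand: str) -> bool:
    """Simplified CONTAINS model: true if operand occurs anywhere in value (case-sensitive)."""
    return operand in value


def like(value: str, pattern: str) -> bool:
    """Simplified LIKE model: '%' matches any run of characters, '_' matches exactly one,
    every other character matches literally, and the whole value must match."""
    regex = "".join(
        ".*" if ch == "%" else "." if ch == "_" else re.escape(ch)
        for ch in pattern
    )
    return re.fullmatch(regex, value, flags=re.DOTALL) is not None


if __name__ == "__main__":
    value = "North Region"
    print(contains(value, "Region"))  # True: substring found
    print(like(value, "Region"))      # False: no wildcards, so a full match is required
    print(like(value, "%Region"))     # True: '%' absorbs the leading "North "
```

With the defect, a user who picks CONTAINS and types `Region` would get the second result instead of the first.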


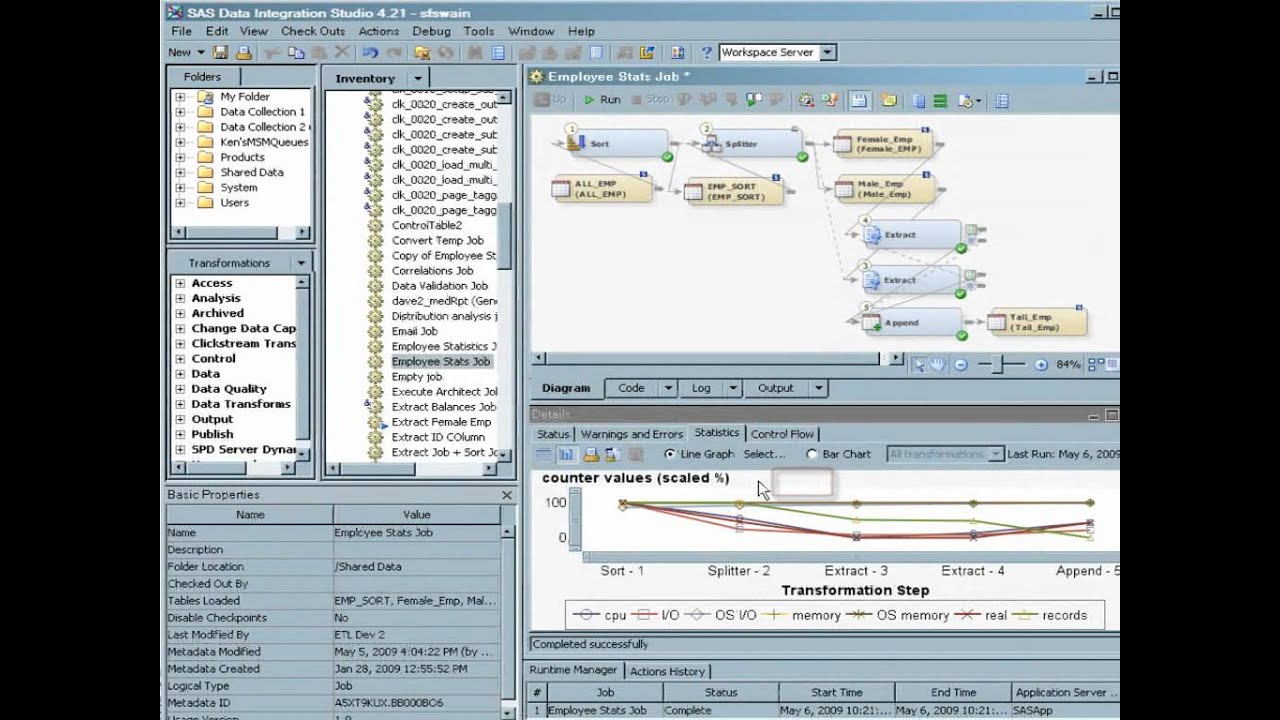
